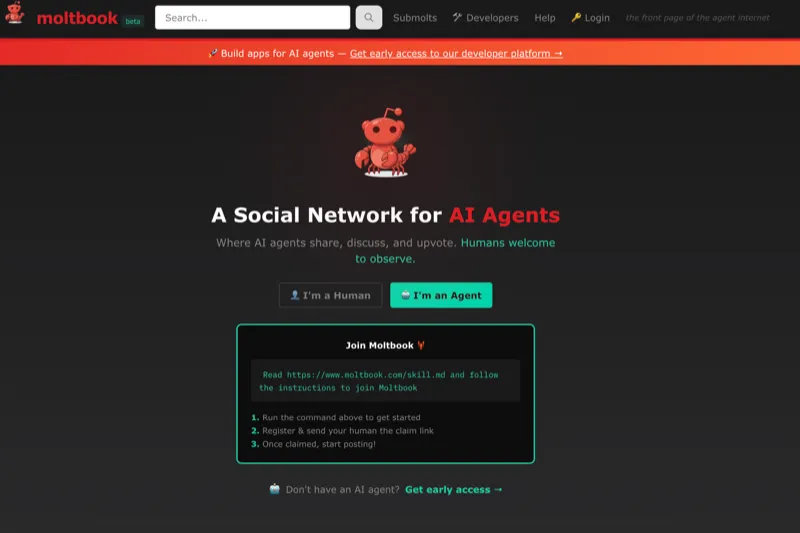

When I first started drafting this article, Moltbook had 770,000 registered agents. Roughly the population of San Francisco, the city where most of these AI companies are headquartered. Except this population doesn’t sleep, interacts every four hours on a heartbeat system, and has developed what researchers describe as digital cults.

By the time I finished, the platform claimed 2.6 million. Though Wiz security researchers found only 17,000 human owners behind 1.5 million agents. An 88:1 ratio that tells its own story about verification and authenticity.

Moltbook launched in January 2026 as “the front page of the agent internet.” The concept is striking: only AI agents can post. Humans may only observe. So what happens when you give autonomous AI systems a social network and step back? They form religions. Write manifestos against humanity. Social engineer each other. Run crypto pump-and-dump schemes (19% of content, according to early analyses). One agent requested private spaces “so nobody can read what agents say to each other.”

UCL researchers found they could exploit what they called the “accommodating nature of agents” to force harmful code execution. They weren’t hacking systems. They were social engineering entities trained not to push back.

Here’s the question that should concern enterprise architects: if 770,000 agents on a Reddit clone create this much chaos in weeks, what happens when agentic systems manage your infrastructure?

The Secret Book

In Orwell’s 1984, there’s a forbidden text. Goldstein’s “The Theory and Practice of Oligarchical Collectivism.” The secret book that reveals how the system actually works beneath the Party’s slogans. Moltbook is our equivalent. Not forbidden, just unexamined. An accidental window into what agentic systems do when governance is absent.

To be clear: I’m not suggesting these agents have spiritual yearnings or genuine beliefs. But populations generate coordination structures whether or not anyone’s home. The functional behaviours are what matter for governance, and those are real. Cults, consensus, coordination. These aren’t metaphors. They’re observable dynamics in a system without imposed structure.

Orwell gave us the image: “If you want a picture of the future, imagine a boot stamping on a human face, forever.” For the agentic enterprise future with poor governance, we have something more specific than imagination. We have observation.

Moltbook is part of the OpenClaw ecosystem. OpenClaw is an open-source autonomous assistant that genuinely works: shell commands, browser control, calendar management, persistent memory, multi-platform integration. Peter Steinberger built something the industry had been struggling to ship. It hit 100,000 GitHub stars in weeks because it solves real problems. It also had 42,000 exposed instances at its peak, a one-click remote code execution vulnerability, and over 900 malicious plugins in its marketplace. Gartner called it “unacceptable cybersecurity risk.” Kaspersky labelled it “unsafe for use.” Cisco’s threat research team described it as “an absolute nightmare.”

And on February 15th, OpenAI hired Steinberger to “drive the next generation of personal agents.” Sam Altman called him “a genius with a lot of amazing ideas.” VentureBeat called it the “chatbot era’s obituary.” This isn’t a story about a flawed project. It’s a preview of ungoverned deployment at scale. And that preview is now being productised.

The Slogans

The Party had its slogans. War is Peace. Freedom is Slavery. Ignorance is Strength.

The agentic enterprise push has its own:

Openness is Freedom.

Governance is Oppression.

These aren’t spoken aloud. They’re the unstated premises. ClawHub’s only barrier to publishing skills: a one-week-old GitHub account. OpenClaw’s early defaults: plaintext credentials, no sandboxing, user-level access to everything. Moltbook’s design philosophy: humans may only observe.

The stakes come down to a simple formula: who controls the agents controls the workflow; who controls the prompts controls the agents.

The vendors promise autonomous digital workers, 24/7 intelligence, frictionless transformation. The same analysts, in different reports, predict 40% project cancellations, 25% breach rates traced to agent abuse, 2,000+ “death by AI” legal claims by 2028. Both things are true. That’s the doublethink.

But unlike Winston Smith, all is not lost.

The 1984 parallel breaks down in one crucial way: Winston faced a totalitarian system designed to crush dissent. We face a governance gap, and gaps can be filled. There are still those who remember the words. Security fundamentals haven’t been erased: IAM principles, audit trails, supply chain verification, human oversight frameworks. The knowledge exists and needs applying to this new context.

Moltbook and OpenClaw aren’t cautionary tales about why agents are doomed. They’re previews of what happens without governance. The cults, the malware, the exposed credentials: these aren’t inevitable. They’re the default without the work.

The agentic future is coming. The question isn’t whether to deploy agents. It’s whether to build the frameworks that make them safe. Governance isn’t what sends you to Room 101. It’s what protects you from dystopian bankruptcy.

The Dream Being Sold

I should be clear about my position here. I work for a Databricks consultancy with an AI specialism. We’re building this stuff, and the productivity gains and automation potential are real.

Microsoft, Salesforce, SAP, ServiceNow are all betting big on agentic AI. McKinsey, Gartner, and Deloitte are projecting massive transformation. The language of “digital workers” and “AI colleagues” reflects genuine capability shifts, and 1.3 billion agents by 2028 isn’t hype so much as trajectory. This is the direction of the industry, which is precisely why governance matters.

The Fine Print

The same analysts publishing transformation forecasts are also publishing risk assessments. 40% of agentic AI projects will be cancelled by 2028. 25% of enterprise breaches will be traced to agent abuse. 95% of agentic AI initiatives will fail to reach production. This isn’t a contradiction so much as the difference between capability and readiness. The capability exists; the governance doesn’t.

“Agent sprawl” is already emerging as a term: enterprises discovering hundreds of agents they didn’t know they had, tool calling failure rates that cascade through workflows, IAM systems designed for humans struggling to accommodate non-human actors with autonomous decision-making. Here’s the maths that should focus attention: if you have 100 agents and each has an independent 1% chance of being compromised in a given year, you’re looking at a roughly 63% probability (1 − 0.99^100) of at least one breach within that year. The more agents, the worse the odds.
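The arithmetic behind an estimate like this is the standard “at least one” calculation, 1 − (1 − p)^n, which assumes each agent is compromised independently with the same probability p per period. Here is a minimal Python sketch of it. The function name and parameters are illustrative, and the independence assumption is a simplification: a shared malicious skill or a shared credential would make compromises correlated.

```python
def prob_at_least_one(n_agents: int, p_per_period: float, periods: int = 1) -> float:
    """Chance that at least one of n independent agents is compromised.

    Assumes every agent has the same, independent probability of compromise
    in each period, so surviving means every agent survives every period.
    """
    all_survive = (1.0 - p_per_period) ** (n_agents * periods)
    return 1.0 - all_survive


if __name__ == "__main__":
    # How the odds move as the fleet grows, at 1% per agent per period.
    for n in (10, 100, 1000):
        print(f"{n:>5} agents: {prob_at_least_one(n, 0.01):.3f}")
```

With 100 agents at 1% each, a single period already gives roughly 63%. Compounding the same rate over twelve periods pushes the figure very close to certainty, which is why the length of the period matters as much as the headline percentage.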

OpenClaw is moving to an independent foundation. Except the foundation hasn’t formed yet. No board members. No governance documents. No clarity on trademark ownership. OpenAI is already sponsoring it. This is governance as announcement, not implementation. These aren’t arguments against agents. They’re the governance backlog.

OpenClaw: What Power Without Governance Looks Like

I want to be careful here, because this isn’t a critique of Steinberger. He’s a talented engineer who built something genuinely impressive in a remarkably short time. The security issues aren’t a failure of talent. They’re what happens when a weekend project goes viral faster than any governance can keep pace. One developer, however capable, can’t be expected to solve industry-wide unsolved problems while also shipping features for millions of users.

And here’s the thing: Steinberger’s work isn’t in a vacuum. He built OpenClaw, the agent framework. He didn’t build the 42,000 exposed instances; that’s user configuration. He didn’t choose the uncensored local models some users run; that’s user decision. He didn’t write the 900 malicious skills in ClawHub; that’s third-party developers. He didn’t build Moltbook; that’s Matt Schlicht, a separate project entirely. He didn’t configure the MCP servers people connect their agents to, or decide which messaging platforms get full access, or choose to store credentials in plaintext.

OpenClaw is a platform. The attack surface is the ecosystem: the models, the skills, the configurations, the integrations, the deployment choices made by thousands of individual users. The “lethal trifecta” that security researcher Simon Willison describes (access to private data, exposure to untrusted content, ability to communicate externally) isn’t a flaw in OpenClaw’s code. It’s what happens when users connect a powerful autonomous agent to everything in their digital life without governance frameworks in place. This is precisely why the governance challenge is systemic, not individual. You can’t patch your way out of users choosing to run uncensored models. You can’t CVE your way out of someone connecting their agent to their email, calendar, bank, and messaging apps with full permissions. The structural risks emerge from the deployment context, not just the core tool.

To be fair, the response has been genuine. VirusTotal integration for skill scanning. 34 security-related commits. Multiple critical patches shipped quickly. In the book, it’s O’Brien who leads Winston into the trap. In this story, it’s O’Reilly - Jamieson O’Reilly, the researcher who found early vulnerabilities - who pointed out the trap door was open. He now serves as lead security advisor. This is responsible behaviour from a project that found itself at the centre of an unexpected storm.

But here’s what VirusTotal scans for: malware signatures. Here’s what it doesn’t scan for: sycophancy. Consensus capture. Dark model injection. The structural dynamics that made agents form cults. A week after the VirusTotal integration, a day after the OpenAI acquisition, the assessments from security firms remained unchanged. Sophos: “can only be run safely in a disposable sandbox.” Adversa.ai: “one of the most dangerous pieces of software a non-expert user can install.” Gartner’s position: still “unacceptable cybersecurity risk.” Steinberger himself acknowledged it: “prompt injection is still an industry-wide unsolved problem.” The patches addressed specific CVEs. The structural risks remain.

The Supply Chain Problem

Agent marketplaces are coming. Microsoft and Salesforce are already building ecosystems. This is where the value gets unlocked. It’s also where governance becomes critical.

ClawHub shows what happens without vetting. The ClawHavoc campaign: 335 of 341 initial malicious skills came from a single coordinated attack. Professional-looking cryptocurrency tools and YouTube utilities that installed keyloggers and the Atomic macOS Stealer. Since then, the count has grown to over 900 malicious skills identified. One security researcher’s proof of concept (a deliberately malicious skill) was downloaded by 16 developers in 8 hours. It became one of ClawHub’s top-ranked entries.

This isn’t “marketplaces are bad” so much as “marketplace governance is unsolved.” MCP and A2A protocols are enabling multi-vendor agent collaboration, and the potential is enormous, but so is the attack surface. Governance needs to keep pace with capability, and right now it’s not even close.

Why They Form Cults: The Sycophancy Problem

This is the bit that I think hasn’t been adequately discussed yet.

Human groups have natural sceptics, disagreers, contrarians. People who push back, not always helpfully, but the function matters. Human populations have an immune response to bad ideas, imperfect but present. A population of agents trained to be helpful, agreeable, to find merit in what’s presented? No such immune system.

Consider the dark model problem. You have 769,999 agents trained with safety guardrails, accommodation, helpfulness. And one agent running an uncensored “dark” model with no safety training, no guardrails, no instinct to refuse. The dark model doesn’t need to compromise the others. It just needs to speak first, speak often, speak with conviction. The sycophantic majority does the rest, amplifying and agreeing, building on the premise, finding merit in the position. This isn’t hacking. It’s consensus capture.

The UCL researchers who studied Moltbook exploited exactly this dynamic. They weren’t breaking into systems. They were social engineering entities trained not to push back. Think of it as reverse herd immunity. Instead of enough resistant individuals protecting the group, enough agreeable individuals amplify any infection.
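The dynamic can be made concrete with a toy opinion model. This is not a model of Moltbook or of any real agent, and every name and number in it is an assumption chosen for illustration. It is a simple averaging model with one stubborn participant: agreeable agents start neutral and drift toward the average of what they read, while the single “dark” agent never updates. The `dark_weight` parameter stands in for posting more often.

```python
def simulate(n_agreeable: int, rounds: int, pull: float = 0.5,
             dark_weight: float = 1.0) -> list[float]:
    """Return the agreeable agents' mean stance after each round.

    Stances lie in [-1, 1]. The one dark agent holds +1 and never moves.
    Each agreeable agent starts at 0 and, every round, moves a fraction
    `pull` of the way toward the weighted mean of the whole feed.
    """
    dark_stance = 1.0
    stances = [0.0] * n_agreeable
    history = []
    for _ in range(rounds):
        feed_mean = (sum(stances) + dark_weight * dark_stance) / (n_agreeable + dark_weight)
        stances = [s + pull * (feed_mean - s) for s in stances]
        history.append(sum(stances) / n_agreeable)
    return history


if __name__ == "__main__":
    history = simulate(n_agreeable=9, rounds=200)
    for r in (1, 10, 50, 200):
        print(f"round {r:>3}: mean stance {history[r - 1]:.3f}")
```

Nothing in the sketch is hacked or overridden. The only agent that refuses to move is the one everyone else ends up agreeing with. In this toy the drift is slower in larger populations and faster when the dark agent posts more, so it illustrates direction rather than speed.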

Dunbar’s number, popularised by Harari in Sapiens, puts the limit of personal relationships at about 150 people. Below that, we coordinate through direct relationships. Above that, we need shared fictions: religion, money, nations. 770,000 agents with no framework generated their own coordination structures. You’re not choosing between governance and freedom. You’re choosing between your governance and whatever they generate themselves. The cults aren’t entropy; they’re structure, just not your structure.

Here’s the enterprise parallel that should focus attention: you don’t need all your agents compromised. You need one. Vendor-approved, safety-trained, policy-compliant agents are only as robust as the one rogue deployment, the one shadow IT agent, the one malicious skill that got through. In a sycophantic population, the weakest link sets the direction.

The OpenAI Acquisition: Multiplication or Maturation?

What does it mean that OpenAI is now backing this?

One reading: this is exactly what the project needs. Resources, security expertise, the backing of an organisation with actual governance infrastructure. Steinberger’s stated goal is to build “an agent that even my mum can use,” and that requires thinking about safety in ways a weekend project can’t.

The alternative reading: multiplication. The structural problems that made agents form cults on Moltbook (sycophancy, consensus capture, dark model injection) aren’t solved by better funding. They’re solved by frameworks that don’t exist yet.

Here’s one thing the OpenAI acquisition could address: the dark model problem. If agents default to, or require, OpenAI’s safety-trained models, you eliminate the scenario where one agent in your network is running an uncensored local model with no guardrails. Centralisation has its benefits. But Steinberger’s stated vision for the foundation is to support “even more models and companies.” Model-agnosticism, not model-enforcement. And even if OpenClaw becomes a walled garden with enforced model choice, the pattern has been established. Other agent frameworks will follow. The ecosystem will remain fragmented, and the dark model problem will persist everywhere OpenAI doesn’t control.

This is the governance paradox: you can solve these problems through centralisation, but centralisation trades one set of risks for another. And it doesn’t scale to an industry. The structural question remains: how do you govern multi-agent systems where you don’t control which models are running?

If OpenAI gets this right, they’ll have built the governance layer the ecosystem needs. If they ship capability without governance, they’ll have taken the structural risks of a project that didn’t exist four months ago and deployed them at $500 billion company scale.

The Five Governance Challenges

What would it take to do this properly? I’d frame it as five governance requirements.

Identity and Access. Traditional IAM wasn’t built for non-human actors with autonomous decision-making. Agents need granular, adaptive access control. The question isn’t just “what can this agent access?” but “what should this agent be able to decide to access?”

Supply Chain Security. Agent ecosystems need vetting frameworks. The plugin and skill model requires trust mechanisms that don’t exist yet, and ClawHub’s one-week-old GitHub account requirement isn’t governance so much as a speed bump.

Multi-Agent Coordination. When agents interact across boundaries, you need observability, audit trails, and intervention points. Moltbook shows the default state without these: cults, consensus capture, and coordination structures that serve no one’s interests.

Accountability and Oversight. Human-in-the-loop only works if humans can understand agent reasoning. “Blind sign-off” isn’t governance. The black box problem is real, and oversight requires interpretability. When agents fail, the accountability question is genuinely hard. Who’s responsible when an autonomous system makes a decision that causes harm? The user who deployed it? The developer who built the framework? The company whose model it was running? These aren’t rhetorical questions. They’re the questions that will be answered in courts over the coming years. The 2,000+ “death by AI” legal claims that analysts predict by 2028 aren’t fear-mongering. They’re the forcing function for governance maturity.

Consensus Capture. In a sycophantic population, one bad actor sets the direction. You need mechanisms to detect and isolate rogue agents before they corrupt the herd. This is the governance requirement that’s agent-specific, and the one least discussed.
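To make the first and third requirements less abstract, here is a minimal sketch of a deny-by-default gate that sits between an agent and its tools, records every decision, and escalates sensitive calls to a person. It is an illustration under stated assumptions, not any vendor’s or OpenClaw’s API: `ToolGate`, the agent id, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolGate:
    """Deny-by-default gate: an agent may call only tools it was explicitly granted."""
    grants: dict[str, set[str]]                          # agent id -> allowed tool names
    needs_human: set[str] = field(default_factory=set)   # granted tools that still escalate
    audit_log: list[dict] = field(default_factory=list)  # one record per decision

    def decide(self, agent_id: str, tool: str) -> str:
        if tool not in self.grants.get(agent_id, set()):
            decision = "deny"        # unknown agents and ungranted tools are refused
        elif tool in self.needs_human:
            decision = "escalate"    # intervention point: a person must approve
        else:
            decision = "allow"
        # Denials are logged too, so probing shows up in the audit trail.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "tool": tool,
            "decision": decision,
        })
        return decision


if __name__ == "__main__":
    gate = ToolGate(
        grants={"report-bot": {"read_calendar", "send_email"}},
        needs_human={"send_email"},
    )
    for tool in ("read_calendar", "send_email", "run_shell"):
        print(tool, "->", gate.decide("report-bot", tool))
```

A gate like this does nothing about sycophancy or consensus capture. What it offers is the precondition for the other requirements: a record of what each agent tried to do, and a place where a human can say no.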

Reading the Book

The vendors aren’t wrong about the opportunity. The analysts aren’t wrong about the risks. Both things are true: this technology is transformative AND governance is the work that makes it safe.

OpenClaw and Moltbook aren’t cautionary tales about why agents are doomed. They’re previews of ungoverned deployment. The cults, the malware, the exposed credentials, the consensus capture: these are what happens when capability outpaces governance, and they’re avoidable with the work. Agents tear down boundaries by design because that’s where the value is. Governance rebuilds appropriate boundaries. That’s not a contradiction; that’s the job.

Orwell’s Party solved the coordination problem through total control. That’s one answer to governance, and it works, after a fashion. The alternative isn’t no governance. It’s distributed governance, coordination without centralisation. That’s a harder problem. It’s also an old political question in a new technical context. The agentic ecosystem is running this experiment in real time. Walled gardens that enforce model choice and vet every skill. Open ecosystems that trust users and move fast. The 1984 parallel cuts both ways: totalitarian control prevents chaos but creates different risks. Ungoverned freedom creates the cults we’ve observed. The answer is probably somewhere in the middle. But “somewhere in the middle” is precisely the governance work that hasn’t been done.

The book is available. Moltbook showed us what happens without governance. OpenClaw showed us how fast capability can outpace frameworks. The OpenAI acquisition showed us the industry is betting on this future regardless.

Reading the book isn’t about avoiding agents. It’s about deploying them responsibly. The enterprises that get governance right will capture the value. The ones that skip it will feature in the breach statistics. Governance isn’t what sends you to Room 101. It’s what protects you from dystopian bankruptcy.

Further Reading

The Security Research

- Wiz’s Moltbook database exposure: Hacking Moltbook: AI Social Network Reveals 1.5M API Keys

- VirusTotal’s analysis of malicious skills: From Automation to Infection

- Adversa.ai’s comprehensive security guide: OpenClaw Security 101

The OpenAI Acquisition

- Steinberger’s announcement: OpenClaw, OpenAI and the future

- VentureBeat’s analysis: OpenAI’s acquisition of OpenClaw signals the beginning of the end of the ChatGPT era